Development of the HANDi Hand began in the summer of 2014, when the need for an inexpensive, sensorized, multi-articulated hand became apparent in the BLINC Lab. Originally conceived as a hand for use with the Bento Arm, the HANDi Hand has since evolved into a project in its own right. The original inspiration for the finger mechanism came from the inMoov hand, but the design has diverged substantially from it and improved over the course of developing two functional prototypes. In the summer of 2017, we built a third prototype, in which we implemented an alternate finger drive mechanism using zip ties.

We are pleased to announce that the HANDi Hand has now been released as open source on GitHub. This release includes both the 3D-printable hardware and the core Arduino scripts for running and testing it. Integration of the HANDi Hand with the brachI/Oplexus software is planned for Winter 2018 and will allow sensors to be visualized and hand parameters to be updated through the graphical user interface.

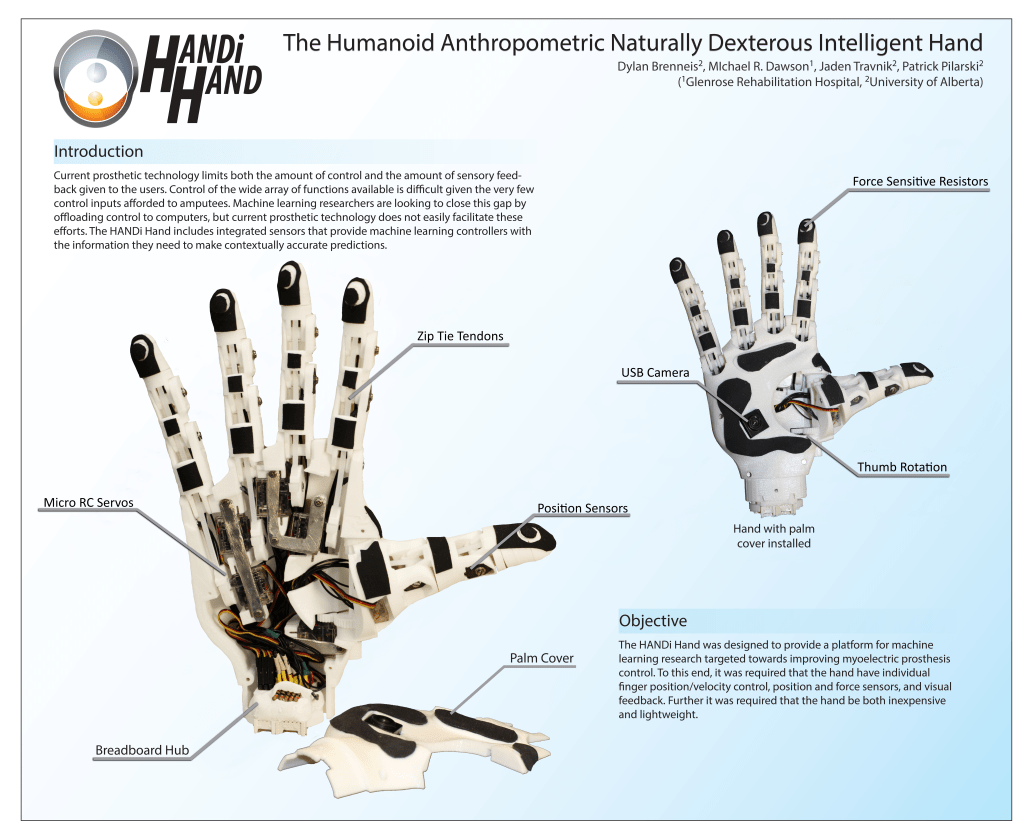

The HANDi Hand consists of six Hitec HS-35HD radio-controlled servomotors and custom 3D-printed parts. The parts have been designed to print in PLA on commonly available RepRap 3D printers. Potentiometers are integrated into the knuckles of the fingers to sense position and velocity, and force-sensitive resistors are embedded in the fingertips to sense contact and grip pressure. A USB camera is included in the palm to give machine learning controllers context-sensitive information about the objects being grasped.
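
As a rough illustration of how a knuckle potentiometer could yield both position and velocity, the sketch below converts a raw analog reading to a joint angle and estimates velocity by finite difference. It is not taken from the released HANDi Hand scripts: the names `JointCalibration`, `adcToAngleDeg`, and `angularVelocityDegPerSec`, the 10-bit reading range, and the 0 to 90 degree travel used in the usage note are all assumptions made for the example.

```cpp
// Hypothetical calibration for one knuckle potentiometer (values are assumptions).
struct JointCalibration {
    int adc_min;           // raw reading at one end of travel
    int adc_max;           // raw reading at the other end (must differ from adc_min)
    double angle_min_deg;  // joint angle corresponding to adc_min
    double angle_max_deg;  // joint angle corresponding to adc_max
};

// Linearly interpolate a raw analog reading to a joint angle in degrees.
double adcToAngleDeg(int adc, const JointCalibration& c) {
    double t = static_cast<double>(adc - c.adc_min) / (c.adc_max - c.adc_min);
    return c.angle_min_deg + t * (c.angle_max_deg - c.angle_min_deg);
}

// Estimate angular velocity from two successive angle samples taken dt_s seconds apart.
double angularVelocityDegPerSec(double prev_deg, double curr_deg, double dt_s) {
    return (curr_deg - prev_deg) / dt_s;
}
```

With a calibration of `{0, 1023, 0.0, 90.0}`, a full-scale reading maps to 90 degrees. In practice the raw signal would likely need filtering before differencing, since a finite difference amplifies sensor noise.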

The HANDi Hand can be controlled using any microcontroller board that has PWM outputs in the 0-5 V range, such as an Arduino development board. For our initial testing, we have been using an Arduino Mega and custom software that includes mapping functionality, so that control interfaces such as joysticks or muscle signals can easily be mapped to the finger movements of the hand. In the future, we would like to develop a fully integrated palm controller.
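
The kind of mapping described above can be sketched as a clamped linear map from a control input to a servo pulse width. This is a minimal illustration, not the mapping code shipped with the HANDi Hand: `ChannelMap` and `mapToPulseUs` are hypothetical names, and the 1000 to 2000 microsecond pulse range in the usage note is an assumed value typical of hobby servos rather than a measured limit of the hand.

```cpp
#include <algorithm>
#include <cmath>

// Hypothetical mapping from one control input (e.g. a joystick axis or a
// processed muscle signal) to one servo channel. All ranges are assumptions.
struct ChannelMap {
    double in_min;     // input value that should fully open the finger
    double in_max;     // input value that should fully close it (must be > in_min)
    int pulse_min_us;  // servo pulse width at in_min, in microseconds
    int pulse_max_us;  // servo pulse width at in_max, in microseconds
};

// Clamp the input to its calibrated range, then interpolate linearly to a
// pulse width. Swapping pulse_min_us and pulse_max_us reverses the direction.
int mapToPulseUs(double input, const ChannelMap& m) {
    double clamped = std::max(m.in_min, std::min(input, m.in_max));
    double t = (clamped - m.in_min) / (m.in_max - m.in_min);
    double pulse = m.pulse_min_us + t * (m.pulse_max_us - m.pulse_min_us);
    return static_cast<int>(std::lround(pulse));
}
```

For example, with `ChannelMap{0.0, 1023.0, 1000, 2000}`, a mid-range input of 511.5 maps to a 1500 microsecond pulse, and out-of-range inputs are held at the nearest limit. On an Arduino, the result could be passed to the Servo library's `writeMicroseconds`.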

For more information about the software and arms that are compatible with the HANDi Hand, please see our BLINCdev development guide.

Development Paper:

- D. J. A. Brenneis, M. R. Dawson, P. M. Pilarski, “Development of the HANDi Hand: An Inexpensive, Multi-Articulating, Sensorized Hand for Machine Learning Research in Myoelectric Control,” Proc. of MEC’17: Myoelectric Controls Symposium, Fredericton, New Brunswick, August 15-18, 2017.

Acknowledgements:

We would like to thank the Alberta Machine Intelligence Institute (Amii) and the University of Alberta for their continued support of this project.